Toxic Bot Content on TikTok in the Netherlands: What Q1 2026 Reveals

Over the first quarter of 2026, we analysed tens of thousands of pieces of bot content on TikTok to better understand the nature of toxic bot-driven content in the Netherlands.

While the overall levels of toxicity remain relatively low, a closer look reveals consistent patterns in narratives, themes, and amplification strategies, particularly around politics, conspiracy theories, and exclusionary rhetoric.

January – March, 2026

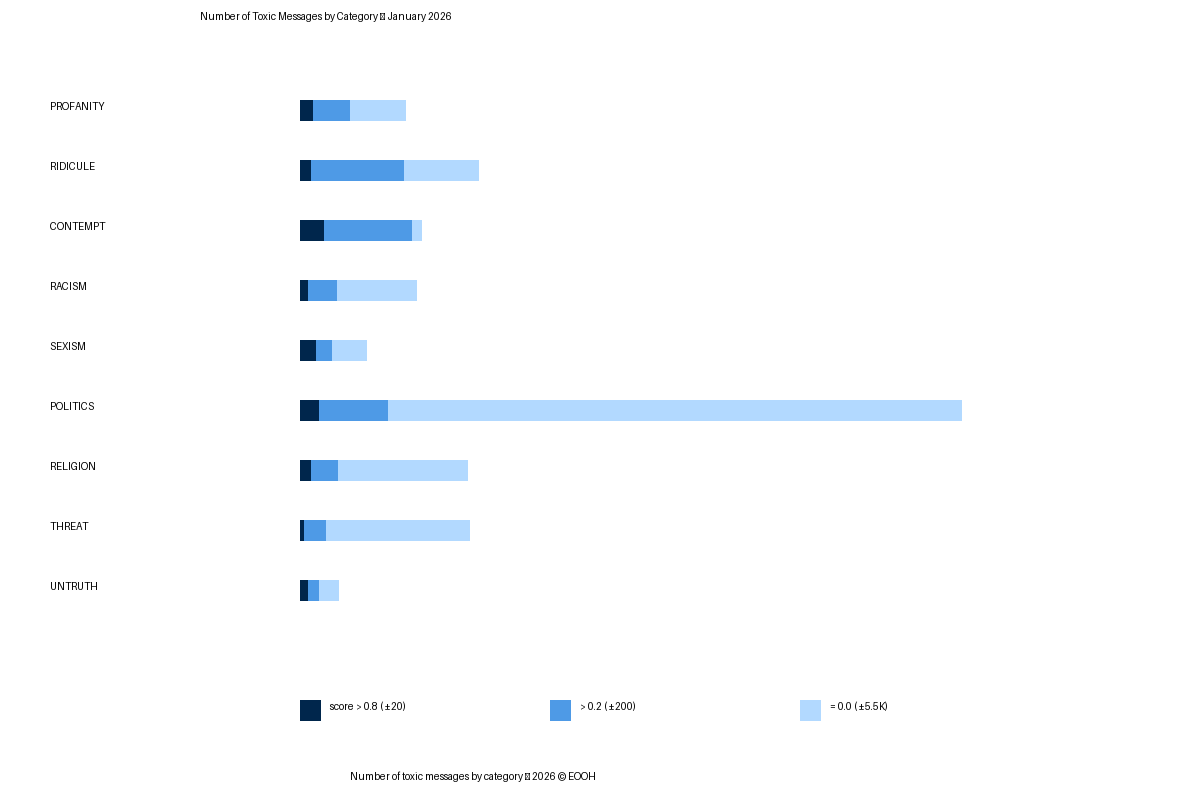

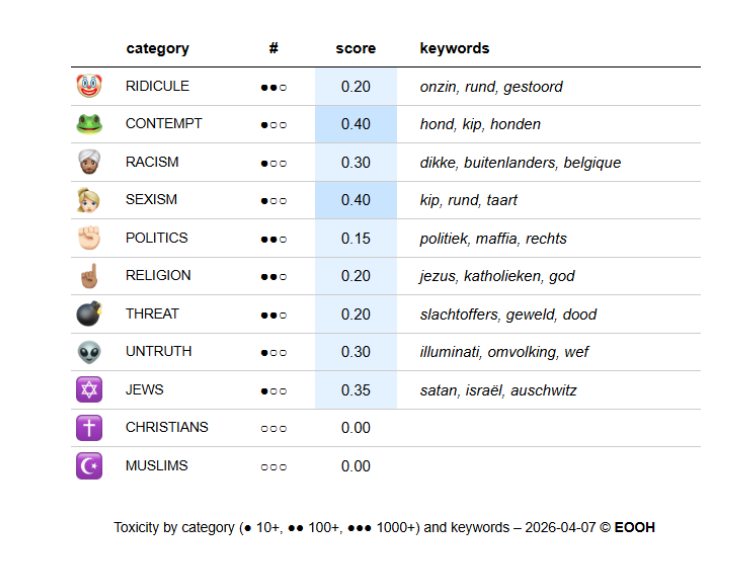

In January, toxic bot content is overwhelmingly political in nature. Much of the discourse centers around polarizing political themes, with frequent references to conspiratorial and antisemitic narratives. Keywords such as “zionisten” (zionists) and “illuminati” appear regularly, and the most common thematic combination is politics paired with hatred toward Jewish communities.

Highly toxic content in this period is primarily categorized as contempt, often expressed through dismissive or demeaning language. Disinformation is also present, with recurring references to conspiracy theories such as “omvolking” (literally “repopulation”; in Dutch the word is typically used in connection with the Great Replacement conspiracy theory) and global elite narratives linked to the “WEF.”

The word cloud reinforces this political focus, with terms like “politiek” (political) dominating, alongside religious references such as “Jezus” and “Allah,” and mentions of Islam.

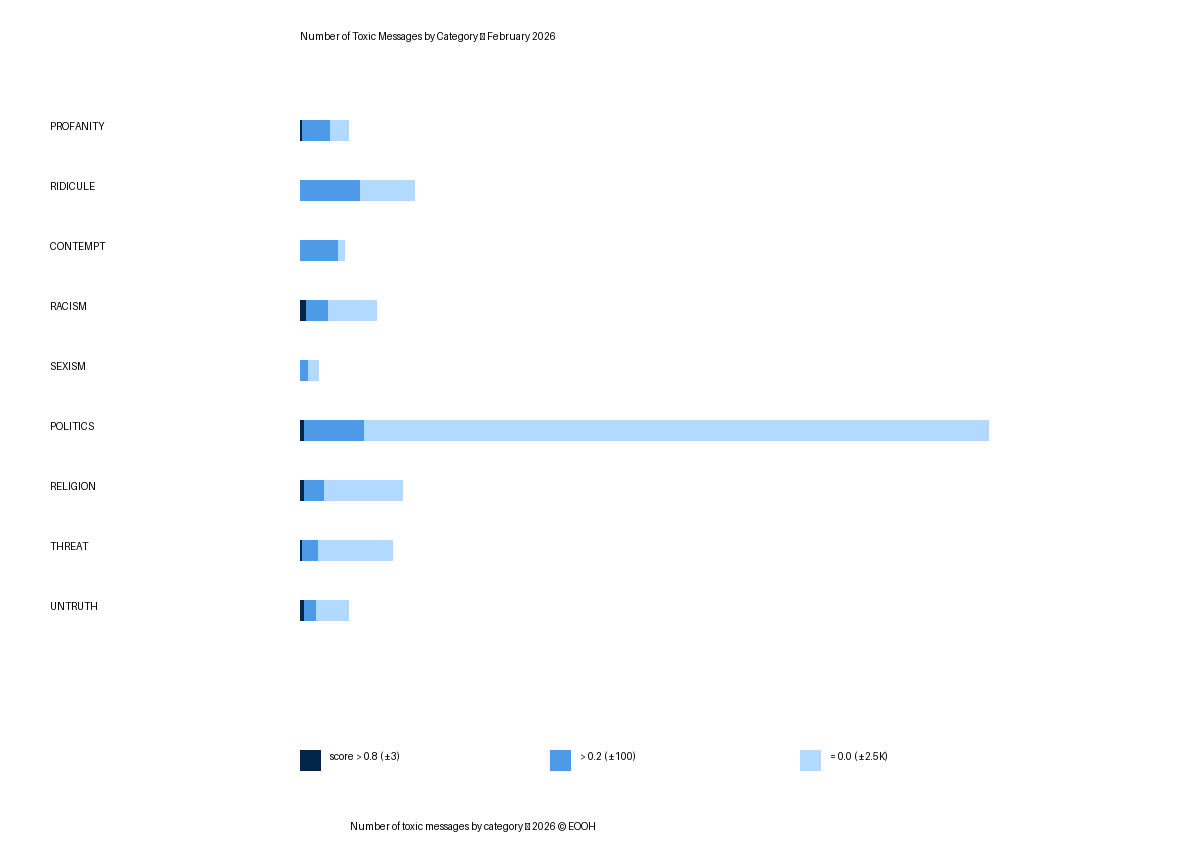

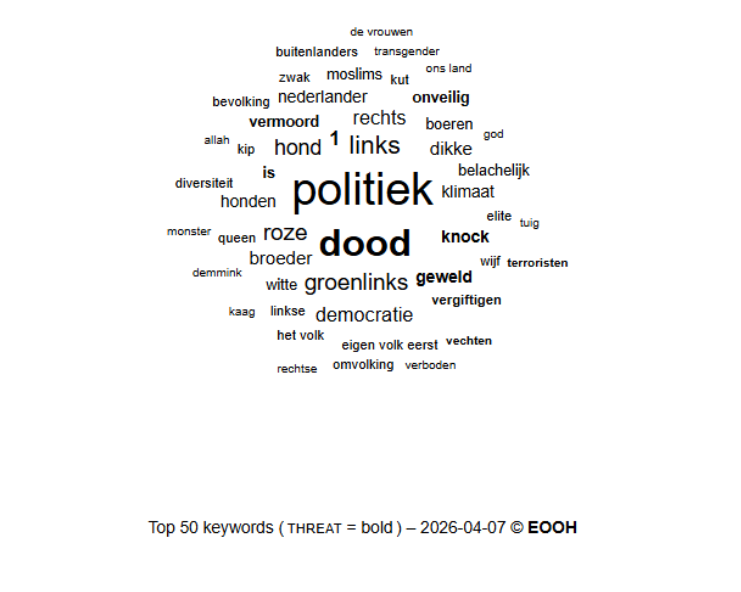

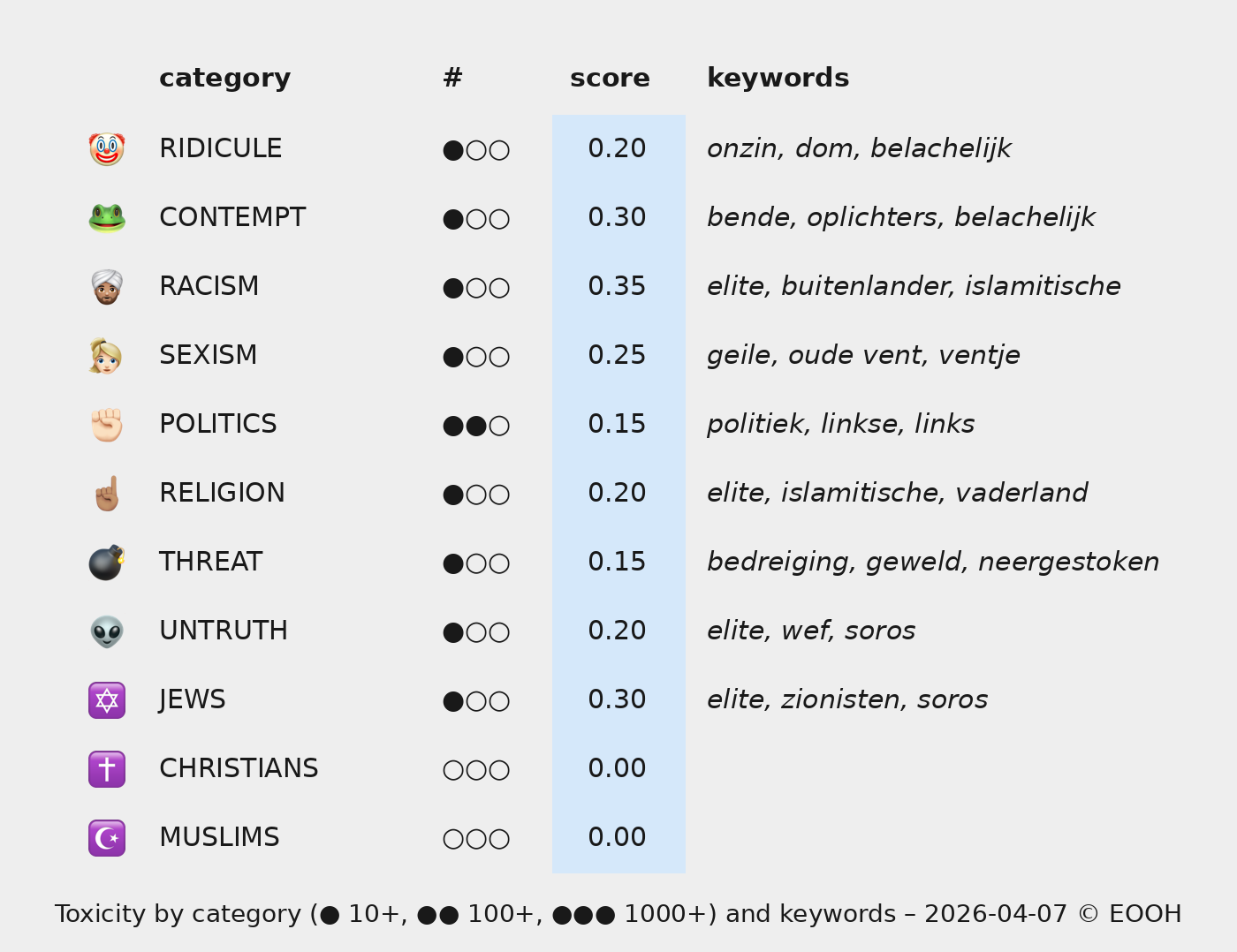

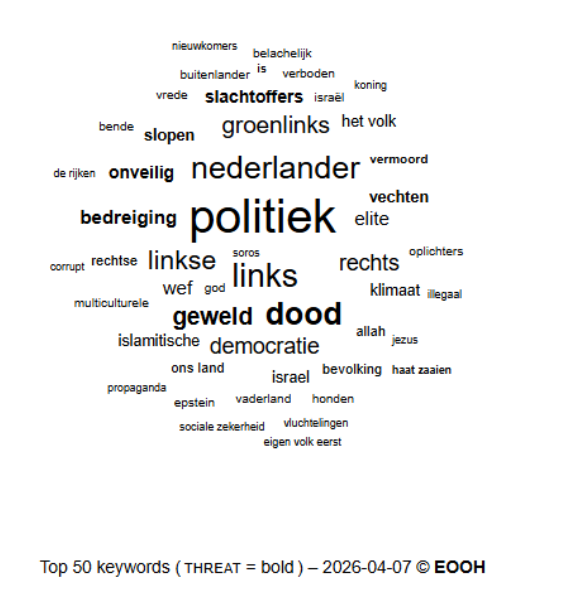

In February, the political nature of toxic bot content is equally present. Word cloud analysis highlights terms such as “politiek” (political), “links” (left), “GroenLinks” (Green Party), and “democratie” (democracy), confirming that much of the activity is embedded in political discourse.

Two notable trends emerge. First, there is a stronger intersection between religion and racism, particularly targeting Muslims, with frequent use of terms such as “islamitische” (Islamic). Second, antisemitic narratives persist, often intertwined with disinformation and conspiracy theories involving figures such as George Soros, the “WEF,” and vague references to “elites.”

A particularly striking element is the appearance of the phrase “eigen volk eerst” (“our own people first”), reflecting the normalization of nationalist and exclusionary rhetoric within online discourse.

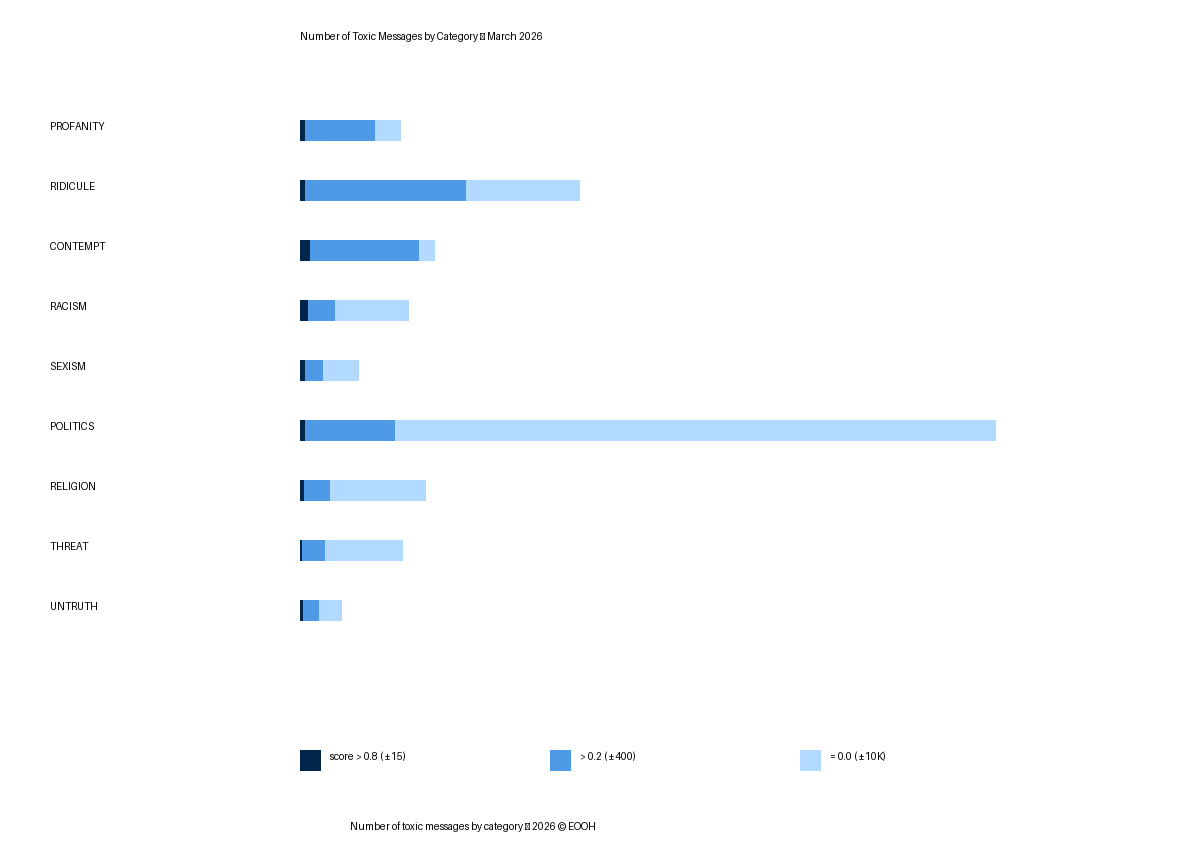

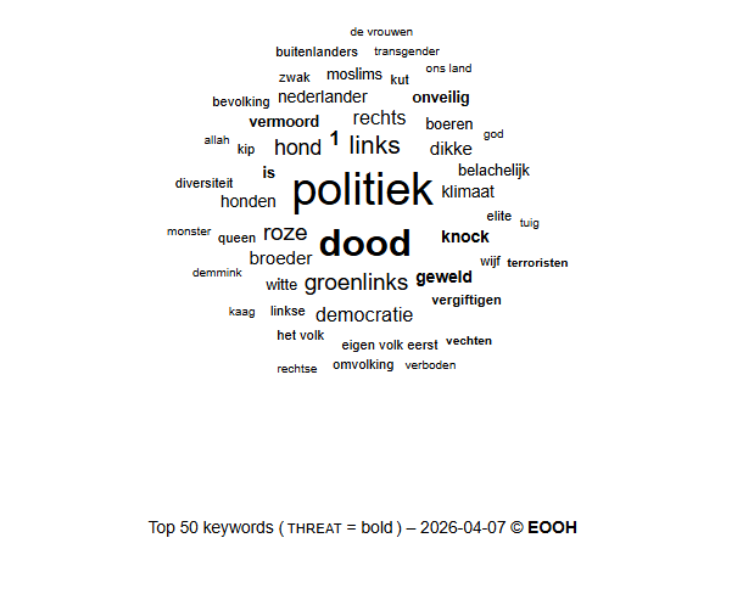

In March, political content continues to dominate, with recurring use of phrases such as “eigen volk eerst” (“our own people first”) and “omvolking” (Great Replacement), reinforcing the presence of Great Replacement narratives. Rather than introducing new themes, March appears to amplify and normalize existing ones at scale.

Overview from January to March: Number of Toxic Messages by Category

Data collected ©EOOH, First Quarter 2026

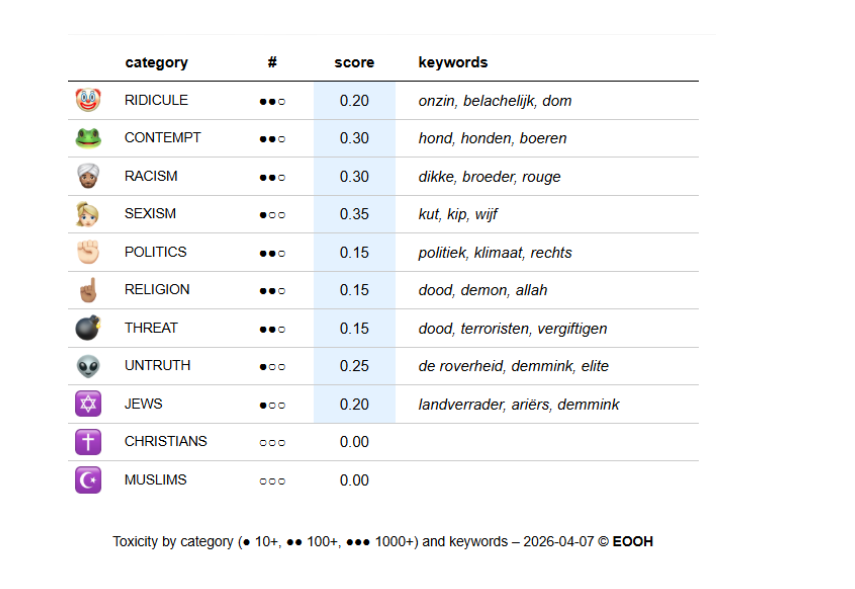

Looking at the full Q1 dataset (34,036 messages), several clear patterns emerge.

Across all three months, political discourse is the primary vehicle for toxic bot content. Frequent use of terms such as “politiek” (politics), “rechts” (right), “links” (left), and “GroenLinks” (Green Party) suggests that these messages are not random but instead aimed at shaping or influencing public debate.

While average toxicity scores remain relatively low (0.20 in January, 0.15 in February and March), the scale of content, particularly in March, means that exposure is significant. Toxicity is therefore less about extreme cases and more about persistent, normalized hostility.

The highest toxicity scores are associated with sexism and contempt, often expressed through insults, ridicule, and demeaning language.

Antisemitic narratives are present throughout the entire period and are frequently combined with conspiracy theories involving global elites, financial control, and institutions. These narratives are not isolated but form a recurring backbone of toxic discourse.

There is also a consistent presence of nationalist and exclusionary rhetoric. Terms such as “omvolking” (Great Replacement), “eigen volk eerst” (“our own people first”), “buitenlanders” (foreigners), and “multiculturele samenleving” (multicultural society) appear alongside references to Islam and Muslims, indicating a persistent focus on identity-based polarization.

Although relatively low in percentage (around 1–2%), both threatening language and disinformation are consistently present across all months. This suggests that while not dominant, these elements are structurally embedded in the ecosystem.

Activity spikes, particularly in mid-March, indicate that bot-driven toxicity is likely reactive and coordinated, responding to specific events or moments in public debate. In other words, as the overall number of posts rises, bot account activity rises with it, which points to automated behaviour aimed at increasing interaction.
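The report does not describe how activity spikes were identified. As an illustration only, the sketch below shows one simple way to flag unusually busy days in a series of daily message counts: a day counts as a spike when its volume exceeds the mean by more than `k` standard deviations. The function name `find_spikes`, the parameter `k`, and the input format are assumptions made for this example and are not taken from the underlying analysis.

```python
from statistics import mean, pstdev


def find_spikes(daily_counts, k=2.0):
    """Return the days whose message count exceeds mean + k * std dev.

    daily_counts: dict mapping a day label (e.g. "2026-03-14") to the
    number of messages collected that day. Both the input format and
    the threshold rule are illustrative assumptions.
    """
    counts = list(daily_counts.values())
    if len(counts) < 2:
        # Too little data to speak of a spike.
        return []
    threshold = mean(counts) + k * pstdev(counts)
    return [day for day, n in daily_counts.items() if n > threshold]
```

A threshold relative to the series' own mean and spread keeps the rule independent of absolute volume, but it is a rough heuristic: a single very large day inflates the standard deviation and can mask smaller spikes.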

The findings from Q1 2026 point to a toxic content ecosystem on TikTok that is less about extreme hate and more about sustained, low-level hostility at scale. Political discourse acts as the primary entry point, with antisemitic, conspiratorial, and xenophobic narratives consistently woven into the conversation.

This aligns with broader research on online hate speech in the Netherlands: harmful narratives are not only spread through overtly illegal content, but also through the normalization of contempt, repetition of conspiracy theories, and strategic amplification during key political moments.

More information

The data for our research comes specifically from TikTok and was gathered with the help of third-party data sources by tracking specific keywords, ranging from locally relevant Dutch themes like ‘AZC’ (asylum seekers' centre) to local Dutch municipalities. Because these keywords are hyperlocal yet generally neutral, most of the data shows little to no toxicity. Therefore, a specific toxicity analysis is applied on top of the ‘bot likelihood’ score.
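The two-step approach described above (a ‘bot likelihood’ score first, toxicity analysis on top) can be pictured with a minimal sketch. Everything specific in it is an assumption for illustration: the `Message` fields, the 0-to-1 score ranges, and both threshold values are hypothetical and do not reflect the actual scoring models or cut-offs used in this research.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Message:
    text: str
    month: str             # e.g. "January"
    bot_likelihood: float  # hypothetical score in [0, 1]
    toxicity: float        # hypothetical score in [0, 1]


# Illustrative cut-offs only; the real thresholds are not published here.
BOT_THRESHOLD = 0.8
TOXIC_THRESHOLD = 0.5


def likely_bot(messages, threshold=BOT_THRESHOLD):
    """Step 1: keep only messages that are likely bot-driven."""
    return [m for m in messages if m.bot_likelihood >= threshold]


def highly_toxic(messages, threshold=TOXIC_THRESHOLD):
    """Step 2: within that subset, keep the highly toxic messages."""
    return [m for m in messages if m.toxicity >= threshold]


def mean_toxicity_by_month(messages):
    """Average toxicity score per month for the given messages."""
    by_month = {}
    for m in messages:
        by_month.setdefault(m.month, []).append(m.toxicity)
    return {month: mean(scores) for month, scores in by_month.items()}
```

Applying `likely_bot` before any toxicity step mirrors the order described above: because most keyword-matched data is neutral, the toxicity figures only become meaningful once the set has been narrowed to likely bot accounts.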